We know that Google likes original or unique content. While there is no penalty for duplicate content, there is a filter that removes results it deems too similar to one another. Creating content is no easy feat, and creating pages that are seen as unique while leveraging existing content is a critical component of cost-effective SEO.

Particularly in an ecommerce business where product descriptions can be similar, it can be difficult to come up with original content for every single product and variation of it.

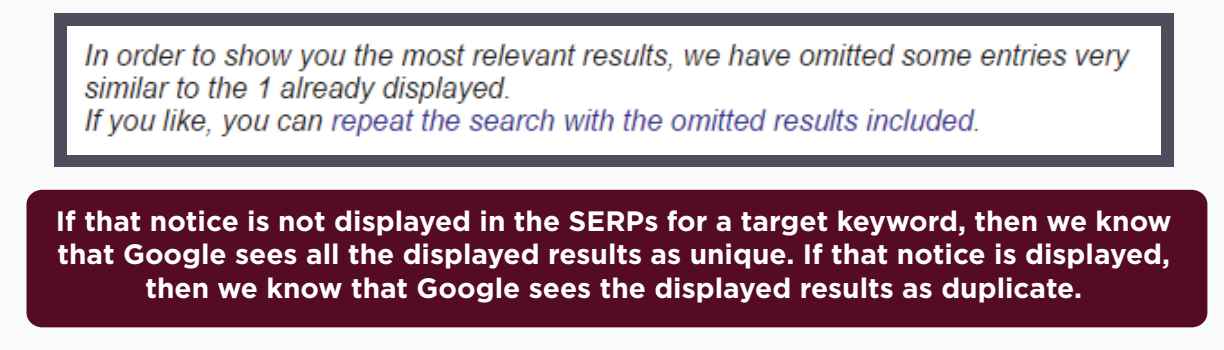

This test looked into the possibility of reusing and rewriting content so that it appears unique in the eyes of search engines. We tested how much original information a page needs in order to be considered unique.

Test Set-up

For this experiment, 5 tests were set up with different ratios of original content to duplicated content. The following ratios were tested:

50% unique : 50% duplicate

33% unique : 66% duplicate

40% unique : 60% duplicate

Test Results

Based on the 5 tests, we found that a page needs at least a 50:50 ratio of original content to duplicate content to be treated as unique and to pass through the filter. This held regardless of how many words the content contained.

This is an important finding, as it significantly lessens the copywriting burden for ecommerce sites and for businesses offering services in different locations.
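The write-up does not say how the unique and duplicate portions were measured, so the following is only a rough sketch of how you might estimate the ratio for your own pages. It assumes a sentence-level comparison: a sentence counts as duplicate if it appears verbatim (ignoring case and punctuation) in the source text, and the share is computed by word count. The function names and the sample product copy are hypothetical, and this is not a model of how Google's filter actually works.

```python
import re


def split_sentences(text):
    """Split text into sentences on '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def normalize(sentence):
    """Lowercase and drop punctuation so trivial edits do not count as unique."""
    return " ".join(re.findall(r"[a-z0-9']+", sentence.lower()))


def unique_share(page_text, source_text):
    """Fraction of the page's words that sit in sentences not found in source_text."""
    source_sentences = {normalize(s) for s in split_sentences(source_text)}
    total_words = 0
    unique_words = 0
    for sentence in split_sentences(page_text):
        norm = normalize(sentence)
        word_count = len(norm.split())
        total_words += word_count
        if norm not in source_sentences:
            unique_words += word_count
    return unique_words / total_words if total_words else 0.0


def passes_threshold(page_text, source_text, threshold=0.5):
    """Assumed check: the page needs at least `threshold` unique share (50:50 by default)."""
    return unique_share(page_text, source_text) >= threshold


# Hypothetical product copy, for illustration only.
source = "Our widget is durable. It ships in two days."
page = "Our widget is durable. This blue model suits small kitchens."
print(unique_share(page, source))      # 6 unique words out of 10
print(passes_threshold(page, source))
```

Raising `threshold` to 0.51 gives a small safety margin over the 50:50 result from the test.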

Clint’s Feedback:

Clint discusses this test in more detail and shares his insights and recommendations on creating content using the information from this test. Check out the video below.

Test number 40 – How Much Original Text is Required for a Page to be Considered “Unique”?

I want to be clear here, first off: I am not talking about the case where you have one location, then you open up three more locations, so you create three more location pages. In that case, you can actually use the exact same piece of content and just change the locations. Modify the page so that it fits that location, Dallas vs Houston vs Boston, for example. That is totally fine. It is acceptable within the local business space in search because Google understands that a plumber is a plumber, and more often than not, you're not going to talk about different things just because you have different businesses or different franchises across the country. So they expect to see pretty much the same thing. Totally fine.

This is about the case where you want to leverage content that you've written for your money site, you want to put it in different places, and you want to be careful about not triggering the duplicate content algorithm.

As an example, let's say you've syndicated a full piece of your content onto a site that has more authority than you. Your site gets taken out of the results, and that authority site comes in. That's not what you want. What you actually want to do is write one piece of content and then unique-ify it. I mean, that's the word today: unique-ify that content, put it on four or five different sites, then rank all of those pages with that unique content and take over a SERP. That's probably the best way to leverage this, especially if you have access to multiple high authority sites that allow you to pull that off in the market.

What you really need to know is: what is unique? How much of my article do I have to change? If you've ever watched one of my videos where I talk about uniqueness, 51% is pretty much the number that I go after, and it comes from this test.

Now, this test showed that 33% unique versus 66% duplicate was considered duplicate content by Google. 40 to 60 was also considered duplicate content by Google. It wasn't until we hit 50-50 that the page was actually considered unique. So that's why I like to use 51%. I've used that 51% recommendation ever since this test, and this test was in 2016. That's how long it has kept working.
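To put the 51% figure in concrete terms, here is a small sketch of the arithmetic: given the number of words you are reusing, how many original words do you need to add to reach a target unique share? It assumes the share is measured by word count, which the test does not confirm, and the function name is made up for illustration.

```python
def original_words_needed(duplicate_words, target_percent=51):
    """Smallest number of original words u with u / (u + duplicate_words) >= target_percent%.

    Rearranging gives u >= target_percent * duplicate_words / (100 - target_percent).
    Integer arithmetic is used so the rounding up is exact.
    """
    if duplicate_words < 0:
        raise ValueError("duplicate_words must be non-negative")
    if not 0 <= target_percent < 100:
        raise ValueError("target_percent must be in the range 0-99")
    numerator = target_percent * duplicate_words
    denominator = 100 - target_percent
    return -(-numerator // denominator)  # ceiling division


# Reusing a 300-word piece (hypothetical length):
print(original_words_needed(300, 50))  # 300 words for an exact 50:50 split
print(original_words_needed(300, 51))  # 313 words to clear 51%
```

In other words, under this assumption the 51% rule means writing slightly more new copy than the amount you reuse.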

It's been a very effective policy for me. Where I use it the most is when I'm doing launch jacking. If you have a Medium page, a couple of other websites, and your own website, you get three or four opportunities to get into the launch jack phase of SEO. That way, if one of those fails, you've got a couple more to fall back on. So you hit the 50/50, or rather you do the 51. All of those pages will get indexed, they are all unique, they all have great copy, and they all lead to your shopping cart or your affiliate link, which is what you want them to do in the first place. So you can get some benefit out of it.

Typically, I wouldn't even worry about this if you're on your own site. If you are targeting the same keyword with multiple pages on your own site, don't create more than one copy or one additional page, because the rest are going to get filtered out. More than likely, the page you really want to rank is the one that ends up filtered out, in favor of pages that were published later, from a freshness perspective, or pages where your SEO or even your keyword density is better. So typically, one keyword or one keyword cluster per page is where I leave it. You don't want to make a whole bunch of pages on your website for the same keywords or the same keyword clusters, because you'll inevitably create keyword cannibalization.

Again, this is really useful when you want to create multiple pages on different domains and take over a SERP. Otherwise, for the most part, I think you're pretty safe from duplicate content. There are, however, instances where duplicate content actually gets you in trouble.

There are negative SEO campaigns built on this. Someone can buy a whole bunch of domains and then copy your content over and over and over again. Eventually, especially if those copies link to your page, Google will turn off or drop your page. It won't necessarily rank the pages that are linking to you. As a matter of fact, in every case that I've seen, it did not, but it did devalue the site that was under attack, and in particular the page that was under attack. Even though you have your canonical set and you're doing all the right things, you can get hit by one of these negative SEO campaigns, and it ultimately hurts you in the long run.

So negative SEO is real, and people can use duplicate content to do it. It is surprisingly effective, regardless of what people tell you about it not having any effect. There is a negative effect to this, and it is something that Google has not adapted to because, frankly, it's hard. Who do you punish, and who gets the first rights, the credit, and all that stuff? It's a little hard for Google to figure out. So that's something that you, as webmasters and business owners, need to be cognizant of.

If you see ranking drops, check your link profile and see whether people are duplicating your content and linking back to your page in some half-hearted effort to create a mass site or something like that. That may be harming you in the long run.